Experiential Learning and Autonomous Correction

Motivation

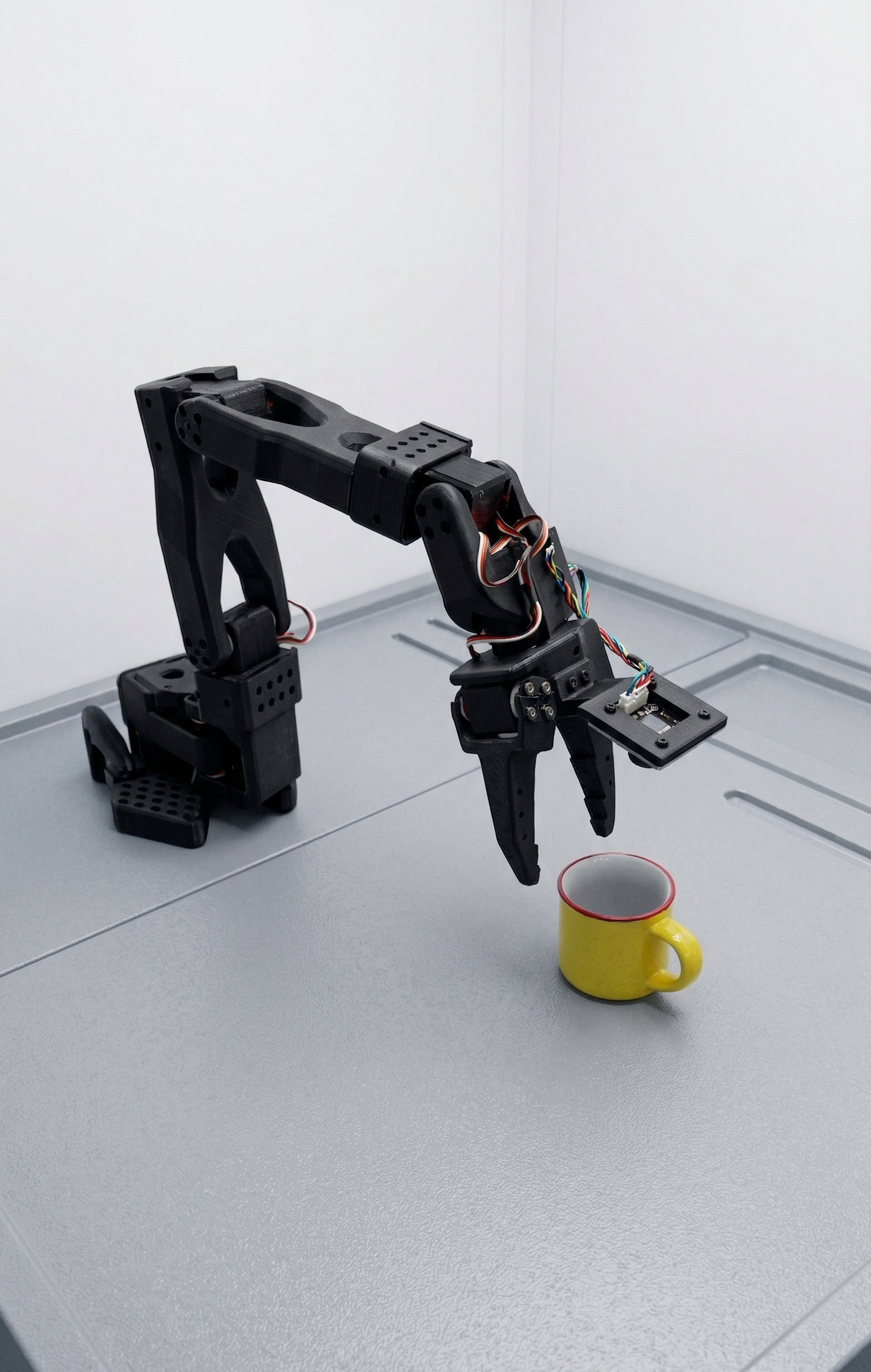

Behavioral cloning provides a useful baseline, but the resulting robot behaviors are often brittle and fail under unforeseen conditions. Real-world deployment requires systems that learn continuously from their own experience and correct their errors autonomously. Experiential learning methods will let robotic systems progressively refine their behaviors, leading to robust, reliable performance even in highly variable environments.

Research Direction

Our research advances reinforcement learning frameworks, particularly advantage-conditioned policies, that let robots learn from both successful demonstrations and their own operational failures. This approach supports continuous improvement and adaptability, and it strengthens autonomous error detection, diagnosis, and correction. Ultimately, we aim to deliver robust robotic systems that improve themselves through experience.
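To make the idea of advantage conditioning concrete, the following is a minimal, generic sketch and not a description of our actual pipeline. It assumes a sparse task reward (1 on success, 0 otherwise), uses the mean return over the dataset as a crude stand-in for a learned value function, and reduces the advantage to a binary label. The names `Step`, `discounted_returns`, and `label_advantages` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Step:
    observation: str  # placeholder for real sensor data
    action: str       # placeholder for a real motor command
    reward: float


def discounted_returns(rewards: List[float], gamma: float = 0.99) -> List[float]:
    """Return-to-go for every step of one episode."""
    returns: List[float] = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def label_advantages(
    episodes: List[List[Step]], gamma: float = 0.99
) -> List[Tuple[str, bool, str]]:
    """Turn raw episodes into (observation, positive_advantage, action) tuples.

    Assumption: the baseline is the mean return over all steps, a crude
    substitute for a learned value function.
    """
    all_returns = [
        discounted_returns([s.reward for s in ep], gamma) for ep in episodes
    ]
    flat = [g for ep_returns in all_returns for g in ep_returns]
    baseline = sum(flat) / len(flat)

    labelled = []
    for ep, ep_returns in zip(episodes, all_returns):
        for step, g in zip(ep, ep_returns):
            # Advantage = return-to-go minus baseline, reduced to a binary flag.
            labelled.append((step.observation, g - baseline > 0.0, step.action))
    return labelled


# One successful demonstration and one failed autonomous attempt.
success = [Step("o0", "reach", 0.0), Step("o1", "grasp", 0.0), Step("o2", "lift", 1.0)]
failure = [Step("o0", "reach", 0.0), Step("o1", "push", 0.0), Step("o2", "lift", 0.0)]
dataset = label_advantages([success, failure], gamma=1.0)
```

In this sketch a policy would be trained by supervised learning to predict the action from the observation together with the advantage flag, so failed attempts still contribute training signal instead of being discarded. At deployment the flag is fixed to `True` to request the better-than-average behavior.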