Visual and Tactile Sensor Fusion

Motivation

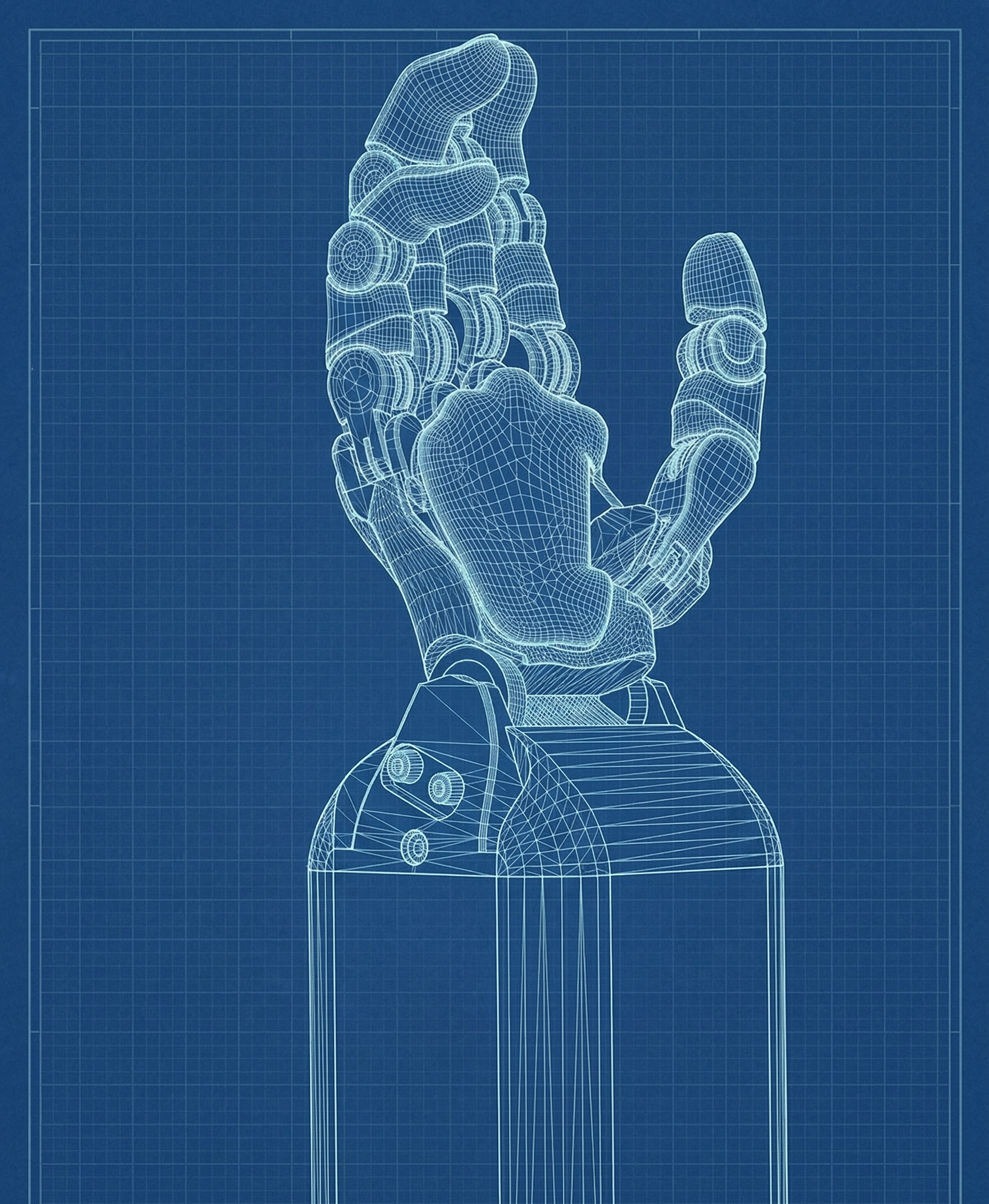

Vision alone is often insufficient for robotic manipulation, especially in occluded or contact-rich scenarios. Tactile sensing fills this gap by giving the robot feedback at the point of contact, where visual feedback is inadequate or unavailable. Proficient visual-tactile integration is therefore essential to advancing robot dexterity and manipulation skills in complex tasks and environments.

Research Direction

Our research explores the fusion of high-resolution tactile sensing with visual inputs, aiming to develop “blind dexterity” capabilities. By building models that integrate visual and tactile information, we aim to improve robotic manipulation in contact-intensive tasks, especially under visual occlusion. We expect this sensory integration to make robotic interactions more precise and reliable across diverse applications.